This is a pre-release of oMLX 0.3.0. It will go through 1 day of testing before the official release. If you find any bugs, please report them on the issues page!

Highlights

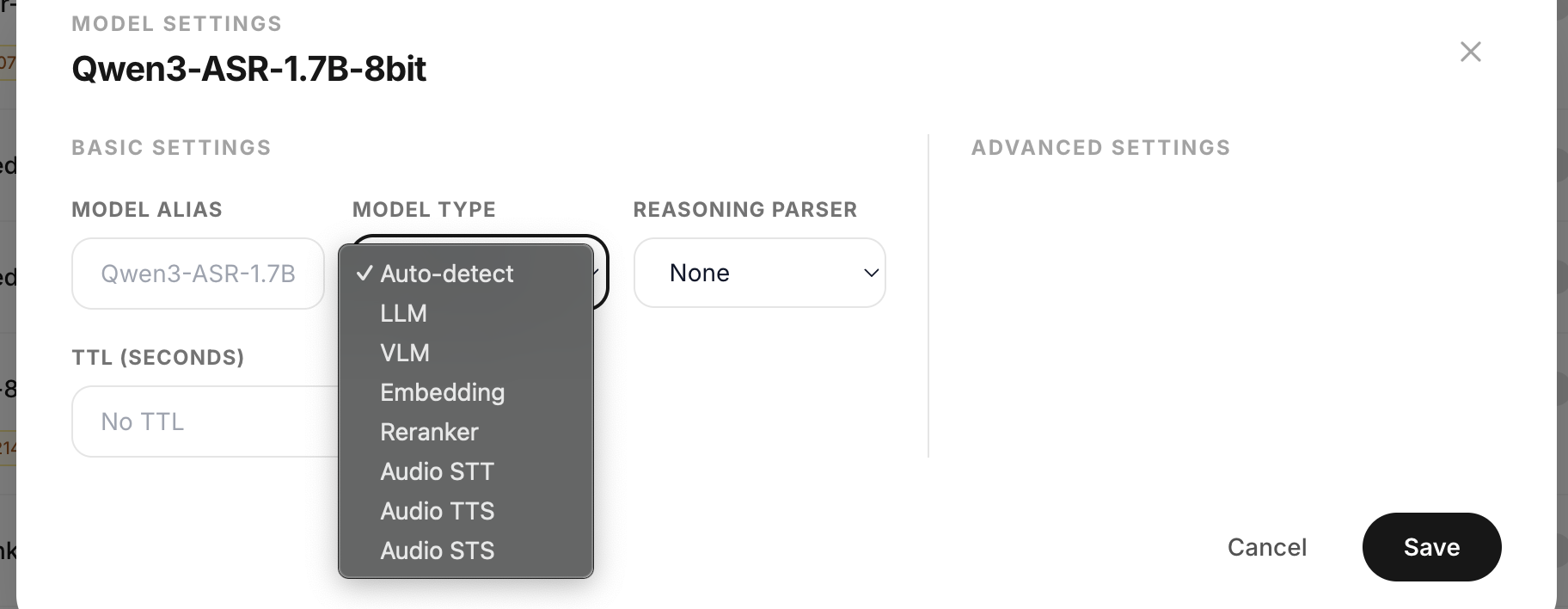

Audio support — STT, TTS, STS

mlx-audio integration by @ethannortharc brings three new engine types for audio models on Apple Silicon.

- STT (Speech-to-Text): Whisper, Qwen3-ASR, Parakeet, Voxtral

- TTS (Text-to-Speech): Qwen3-TTS, Kokoro, F5-TTS, Sesame CSM, Dia, Spark, CosyVoice

- STS (Speech-to-Speech): DeepFilterNet, MossFormer2, SAMAudio, LFM2.5-Audio

Three new endpoints: /v1/audio/transcriptions and /v1/audio/speech (OpenAI-compatible), plus /v1/audio/process (oMLX-specific). Audio models are automatically detected from the mlx-audio registry and show up in the admin dashboard alongside LLM/VLM models.

Optional dependency — pip install 'omlx[audio]'. Homebrew and DMG builds include mlx-audio by default. (#365)
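As a rough illustration of the speech endpoint, the sketch below builds (but does not send) a request in the OpenAI `/v1/audio/speech` shape. The base URL, port, model name, and voice are placeholders chosen for the example, not values confirmed by this release.

```python
import json
import urllib.request

# Assumption: server address and port are placeholders for a local oMLX instance.
BASE_URL = "http://localhost:8000"


def build_speech_request(text: str, model: str, voice: str) -> urllib.request.Request:
    """Build a POST request for /v1/audio/speech without sending it."""
    # OpenAI-style body: model, input text, and voice.
    payload = {"model": model, "input": text, "voice": voice}
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/audio/speech",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical model and voice names, for illustration only.
req = build_speech_request("Hello from oMLX", model="Kokoro", voice="default")

# To send it against a running server:
#   with urllib.request.urlopen(req) as resp:
#       audio_bytes = resp.read()
```

The transcription endpoint would follow the same pattern with a multipart file upload instead of a JSON body.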

XGrammar constrained decoding

xgrammar-based structured output generation by @leuski. Enforces grammar constraints at the logit level using bitmasks, running in parallel with the model forward pass via mx.async_eval.
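To make "logit level using bitmasks" concrete, here is a minimal pure-Python sketch of the idea: one bit per vocabulary token, with disallowed tokens pushed to negative infinity before sampling. The packed 32-bit layout and the function name are assumptions for illustration; the real work is done inside xgrammar and MLX, not by code like this.

```python
import math


def apply_token_bitmask(logits: list[float], bitmask: list[int]) -> list[float]:
    """Keep token i only if bit (i % 32) of word (i // 32) is set."""
    masked = []
    for i, logit in enumerate(logits):
        allowed = (bitmask[i // 32] >> (i % 32)) & 1
        # Disallowed tokens get -inf, so softmax assigns them zero probability.
        masked.append(logit if allowed else -math.inf)
    return masked


logits = [1.5, 0.2, -0.3, 2.0]
bitmask = [0b0101]  # bits 0 and 2 set: only tokens 0 and 2 are grammar-legal
masked = apply_token_bitmask(logits, bitmask)
```

Because the mask depends only on the grammar state and not on the logits, it can be computed while the forward pass is still running, which is the overlap the paragraph above describes.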

Supported grammar types:

- `json` — JSON schema validation

- `regex` — regular expression patterns

- `grammar` — EBNF/GBNF grammars

- `choice` — allowed string lists

Uses vLLM-compatible structured_outputs field (in extra_body). Per-model reasoning_parser config maps xgrammar's structural tags to model protocols (Qwen, Harmony, DeepSeek, Llama, etc.). Performance overhead is 9–24% on decode (no TTFT impact), decreasing with larger models.

Optional dependency — pip install 'omlx[grammar]'. Homebrew and DMG builds include xgrammar by default. Without it, response_format falls back to prompt injection and structured_outputs returns a 400 with install instructions. (#335)
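A request using `structured_outputs` might look like the sketch below. It assumes the four grammar type names map directly to keys inside `structured_outputs`, as they do in vLLM, and that the field sits at the top level of the request body (which is where the OpenAI Python client places `extra_body` fields). The model name and schema are placeholders.

```python
import json


def build_chat_payload(model: str, prompt: str, structured_outputs: dict) -> dict:
    """Build a chat completion body carrying a structured_outputs constraint."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # With the OpenAI client this would be passed as
        # extra_body={"structured_outputs": {...}} and merged into the body.
        "structured_outputs": structured_outputs,
    }


# Hypothetical schema for illustration.
person_schema = {
    "type": "object",
    "properties": {"name": {"type": "string"}, "age": {"type": "integer"}},
    "required": ["name", "age"],
}

json_payload = build_chat_payload(
    "my-model", "Describe a fictional person.", {"json": person_schema}
)
choice_payload = build_chat_payload(
    "my-model", "Classify the sentiment: 'great release'.",
    {"choice": ["positive", "negative"]},
)
request_body = json.dumps(json_payload)
```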

New Features

- XTC sampler — XTC (eXclude Top Choices) sampling support. Pass `xtc_probability` and `xtc_threshold` through any API endpoint. Defaults to 0.0 (disabled) (#337 by @blightbow)

- MCP Streamable HTTP — MCP now supports Streamable HTTP transport in addition to stdio (#286 by @tianfeng98)

- Multimodal embedding items — `/v1/embeddings` accepts structured `items` with text + image input. Tested with `Qwen3-VL-Embedding-2B-mxfp8` (#373 by @MasakiMu319)

- Custom processor embedding support — Embedding requests route through custom processor hooks when available, fixing models like Qwen3-VL-Embedding that broke on the generic tokenizer path (#369 by @MasakiMu319)

- System prompt support in chat UI — Chat interface now accepts system prompts

- Clear all SSD cache button — Admin dashboard has a new button to clear all SSD cache blocks

- SSD cache size display — Shows SSD cache size even when no models are loaded

- Responsive admin dashboard — Admin dashboard now works on mobile devices

- Real-time menubar updates — macOS menubar status (Stopped → Starting → Running) and button states now update in real time while the menu is open, without needing to close and reopen (#426 by @EmotionalAmo)

- App bundle size reduction — Stripped torch, cv2, pyarrow, pandas, sympy from the app bundle (~780MB saved)

- mlx-vlm bump — Updated to v0.4.2 (7f7a04c)

- mlx-embeddings bump — Updated to v0.1.0 (32981fa)
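For readers unfamiliar with the XTC sampler listed above, the following is a minimal sketch of the general technique as it is usually described: with probability `xtc_probability`, every token at or above `xtc_threshold` is removed except the least likely of them. This is an illustration of the idea under that assumption, not the oMLX implementation, and the function name is hypothetical.

```python
import random


def xtc_filter(probs, threshold, probability, rng):
    """Return renormalized probabilities after one XTC step."""
    # Disabled (0.0), or the random draw says to skip XTC for this token.
    if probability <= 0.0 or rng.random() >= probability:
        return list(probs)
    # Candidate "top choices": tokens at or above the threshold.
    above = [i for i, p in enumerate(probs) if p >= threshold]
    if len(above) < 2:
        return list(probs)  # nothing to exclude with fewer than two candidates
    # Keep only the least probable of the top choices.
    keep = min(above, key=lambda i: probs[i])
    removed = set(above) - {keep}
    filtered = [0.0 if i in removed else p for i, p in enumerate(probs)]
    total = sum(filtered)
    return [p / total for p in filtered]


rng = random.Random(0)
probs = [0.5, 0.3, 0.15, 0.05]
always = xtc_filter(probs, threshold=0.1, probability=1.0, rng=rng)
never = xtc_filter(probs, threshold=0.1, probability=0.0, rng=rng)
```

In the example, tokens 0 and 1 are excluded, token 2 survives as the weakest candidate above the threshold, and the remaining mass is renormalized.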

Bug Fixes

- Fix `ContentPart` list content causing 500 error when Qwen Code CLI sends `[{"type": "text", ...}]` instead of a string (#433 by @mbauer)

- Fix prefix index storing mutable reference to `block_ids`, causing cache corruption on CoW block changes (#391 by @andafterall)

- Fix Anthropic `document` content blocks (base64 PDF) rejected by Pydantic validation (#434)

- Fix "Total Tokens Processed" metric ignoring reasoning/thinking tokens — renamed to "Total Prefill Tokens" for clarity (#430)

- Fix MCP client resource leak on partial connect failure

- Fix `ValueError` from `processor.apply_chat_template` in VLM engine

- Fix fake `<think>` tag prepended in TRACE log output

- Fix VLM cache proxy using wrong offset for batched mask sizing

- Fix `specprefill_threshold` not propagating from model_settings to scheduler

- Fix `max_position_embeddings` not detected in nested `text_config`

- Fix `voice` param not mapped to `instruct` for VoiceDesign TTS models

- Fix 8-bit quantization inconsistency — unified to affine/gs64 (removed mxfp8/gs32)

- Fix admin dashboard polling continuing when browser tab is hidden (#352)

- Fix `_prefix_index` type annotations to match tuple storage

- Fix menubar menu status duplication and `_build_menu` guard during menu open

- Fix mlx-audio dependency conflicts with mlx-lm version pinning

- Fix auto-generate README when frontmatter-only stub exists

- Sync 25 stale tests with current implementation
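The prefix index fix above (#391) addresses a common aliasing bug. The sketch below reproduces the general pattern with hypothetical names: storing a list by reference lets later copy-on-write changes leak into the index, while storing a tuple snapshot does not. It illustrates the class of bug only and is not taken from the oMLX code.

```python
def store_by_reference(index: dict, key: str, block_ids: list) -> None:
    # Buggy pattern: the index and the caller now share one list object.
    index[key] = block_ids


def store_as_tuple(index: dict, key: str, block_ids: list) -> None:
    # Fixed pattern: an immutable snapshot that later mutations cannot touch.
    index[key] = tuple(block_ids)


block_ids = [1, 2, 3]
buggy_index, fixed_index = {}, {}
store_by_reference(buggy_index, "prefix", block_ids)
store_as_tuple(fixed_index, "prefix", block_ids)

# Simulate a copy-on-write step replacing block 2 with a fresh block 99.
block_ids[1] = 99
```

After the mutation, `buggy_index["prefix"]` silently points at block 99, while `fixed_index["prefix"]` still records the blocks that were cached.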

New Contributors

- @ethannortharc — Audio integration (STT/TTS/STS) (#365)

- @leuski — XGrammar constrained decoding (#335)

- @tianfeng98 — MCP Streamable HTTP (#286)

- @MasakiMu319 — Multimodal embedding items (#373, #369)

- @mbauer — ContentPart list type error fix (#433)

- @andafterall — Prefix index mutable reference fix (#391)

- @EmotionalAmo — Menubar real-time update (#426)

Full changelog: v0.2.24...v0.3.0rc1