AirLLM

Handle: airllm

URL: http://localhost:33981


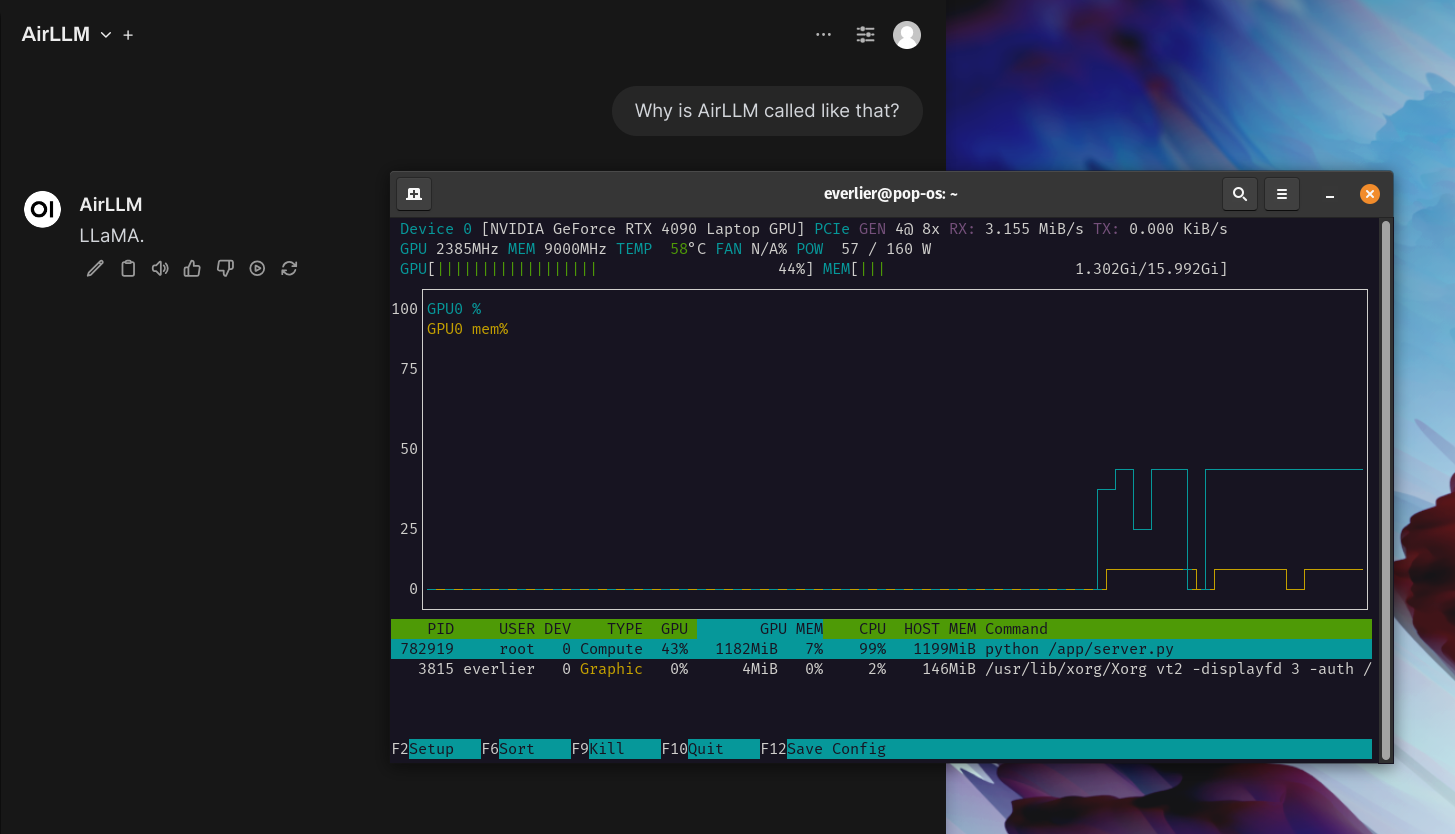

AirLLM optimizes inference memory usage, allowing 70B large language models to run inference on a single 4GB GPU without quantization, distillation, or pruning. It can also run the 405B Llama 3.1 on 8GB of VRAM.

These claims are true, but don't expect performant inference. AirLLM loads LLM layers into memory in small groups, so only a fraction of the model occupies VRAM at any time. The main benefit is that it allows a "transformers"-like workflow for models that are much larger than your VRAM.
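To make the "layers in small groups" idea concrete, here is a toy sketch in plain Python. It is not AirLLM's implementation: the file layout, the function names (`save_toy_layers`, `apply_layer`, `layered_forward`), and the tiny ReLU layers are all hypothetical stand-ins. It only illustrates the general pattern of reading one layer from disk, applying it, and releasing it before the next one is loaded.

```python
import json
import tempfile
from pathlib import Path


def save_toy_layers(directory: Path, num_layers: int, width: int) -> list[Path]:
    """Write one small weight file per layer (a stand-in for a sharded checkpoint)."""
    paths = []
    for i in range(num_layers):
        # Deterministic toy weights: a scaled identity matrix plus a constant bias.
        scale = 1.0 + 0.1 * i
        weights = [[scale if r == c else 0.0 for c in range(width)] for r in range(width)]
        bias = [0.01 * i] * width
        path = directory / f"layer_{i}.json"
        path.write_text(json.dumps({"weights": weights, "bias": bias}))
        paths.append(path)
    return paths


def apply_layer(layer: dict, x: list[float]) -> list[float]:
    """Toy layer: ReLU(W @ x + b), written with plain lists."""
    out = []
    for row, b in zip(layer["weights"], layer["bias"]):
        value = sum(w * xi for w, xi in zip(row, x)) + b
        out.append(max(0.0, value))
    return out


def layered_forward(layer_paths: list[Path], x: list[float]) -> list[float]:
    """Run a forward pass while holding only one layer in memory at a time."""
    for path in layer_paths:
        layer = json.loads(path.read_text())  # load just this layer from disk
        x = apply_layer(layer, x)
        del layer  # drop the weights before the next layer is read
    return x


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        paths = save_toy_layers(Path(tmp), num_layers=3, width=2)
        print(layered_forward(paths, [1.0, 2.0]))
```

The trade-off is visible even in the toy version: every forward pass re-reads every layer from disk, which is why memory use stays low while latency goes up.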

Note

By default, AirLLM requires a GPU with CUDA and can't be run on CPU.

Starting

```bash
# [Optional] Pre-build the image
# Needs PyTorch and CUDA, so will be quite large
harbor build airllm

# Start the service
# Will download selected models if not present yet
harbor up airllm
```

For funsies, Harbor implements an OpenAI-compatible server for AirLLM, so that you can connect it to Open WebUI and... wait... wait... wait... and then get an amazing response from a previously unreachable model.
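Since the server is described as OpenAI-compatible, a plain HTTP client should be enough to talk to it. The sketch below uses only the Python standard library and makes several assumptions: that the service listens on the URL listed at the top of this page, that it exposes the conventional `/v1/chat/completions` route, and that it returns the usual `choices[0].message.content` shape. The helper names and the model identifier are placeholders, not something Harbor defines.

```python
import json
import urllib.request

BASE_URL = "http://localhost:33981"  # service URL listed at the top of this page


def build_chat_request(prompt: str, model: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request (assumed route: /v1/chat/completions)."""
    payload = {
        "model": model,  # placeholder: use whichever model the service was started with
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request: urllib.request.Request, timeout_s: float = 3600.0) -> str:
    """Send the request and return the assistant text; the generous timeout reflects slow layered inference."""
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example usage (requires the service to be running):
#   request = build_chat_request("Say hello in one sentence.", model="your-model-id")
#   print(send_chat_request(request))
```

Building and sending are split so the request can be inspected without a running service. The long default timeout is a guess; tune it to how long your model actually takes to answer.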
